Think AI is just about picking the right tool? Think again.

Most teams do not fail because they chose the wrong model. They fail because they skipped the groundwork. An AI readiness assessment shows whether your business is actually prepared to use AI in a way that is secure, useful, and worth the investment.

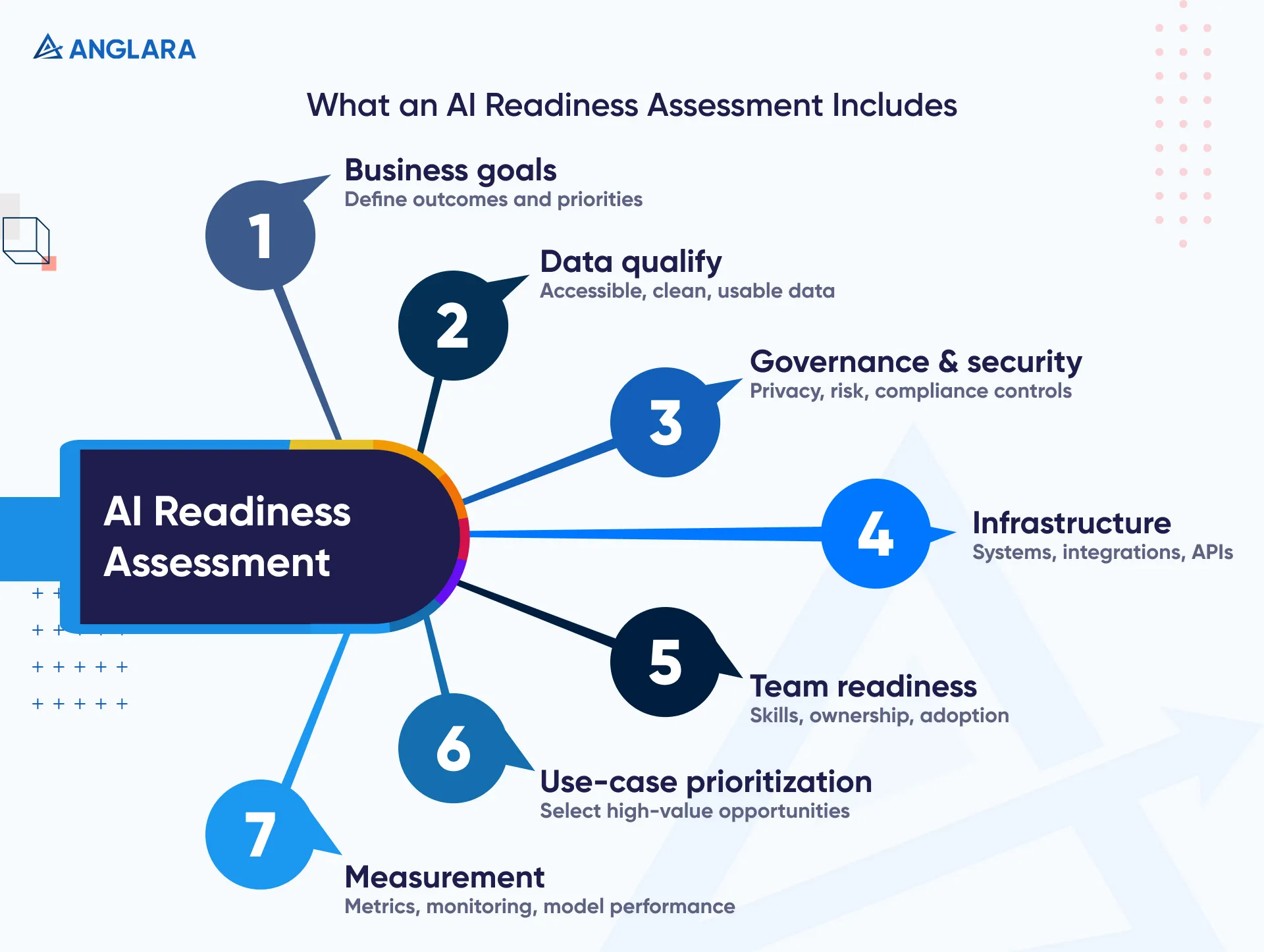

An AI readiness assessment is a structured review of how prepared your business is to adopt and scale AI. In practice, it looks at your business goals, data quality, governance, security, infrastructure, team readiness, and your ability to prioritize high-value use cases. Major frameworks from Microsoft, Google Cloud, Cisco, and NIST all point to the same idea: AI success depends on more than technology alone. It depends on whether your organization has the right foundation to move from experimentation to reliable business outcomes. (Microsoft Learn)

Who This Is For

This guide is for:

- CMOs and RevOps leaders trying to assess whether AI can improve reporting, content workflows, lead handling, or customer support

- CTOs and product owners deciding whether their data and systems are ready for AI-driven features

- Founders who want to avoid wasting money on tools before they know where AI will create real value

- Operations leaders who need a practical way to score readiness before approving implementation work

It is especially useful for teams that already feel pressure to “do something with AI” but are not yet confident about governance, data, or rollout planning.

What Is an AI Readiness Assessment?

In plain English, an AI readiness assessment is a decision tool. It helps you answer one question:

Are we ready to implement AI in a way that is useful, secure, and scalable?

That answer should not come from hype, a vendor demo, or a single workshop. It should come from reviewing the areas that actually affect delivery.

Microsoft’s assessment describes readiness across seven pillars, including business strategy, governance and security, data foundations, culture, infrastructure, and model management. Google Cloud similarly emphasizes data foundations, organizational learning, internal support, and thoughtful pilot selection. Cisco’s assessment also covers strategy, infrastructure, data, talent, operations, and governance. (Microsoft Learn)

So while the labels vary, the pattern is consistent:

- clear business goals

- usable data

- governance and privacy guardrails

- enough infrastructure and integration capacity

- realistic adoption plans

- a shortlist of use cases worth doing first

A good assessment should show both where you are strong and where AI projects are likely to stall.

Why Businesses Need One Before Implementation

Without a readiness assessment, many AI projects start with the wrong question.

Instead of asking, “Which use case gives us the best return with acceptable risk?” teams jump to, “Which model should we use?” or “Which tool should we buy?”

That is backward.

NIST’s AI Risk Management Framework exists because AI creates organizational, technical, and societal risks that need to be managed deliberately. Microsoft and Google both stress that successful adoption depends on aligned strategy, risk controls, and data foundations, not just enthusiasm for AI. (NIST)

This also matches what practitioners complain about in real discussions. On Reddit, people repeatedly point to dirty workflows, poor data access, missing governance, unclear ROI expectations, and a lack of internal skills as the real blockers. In other words, many teams are not tool-poor. They are foundation-poor. (Reddit)

That is why an AI readiness assessment matters. It helps you:

- avoid low-value pilots

- identify blockers early

- align leadership on priorities

- choose use cases that are actually feasible

- move into implementation with fewer surprises

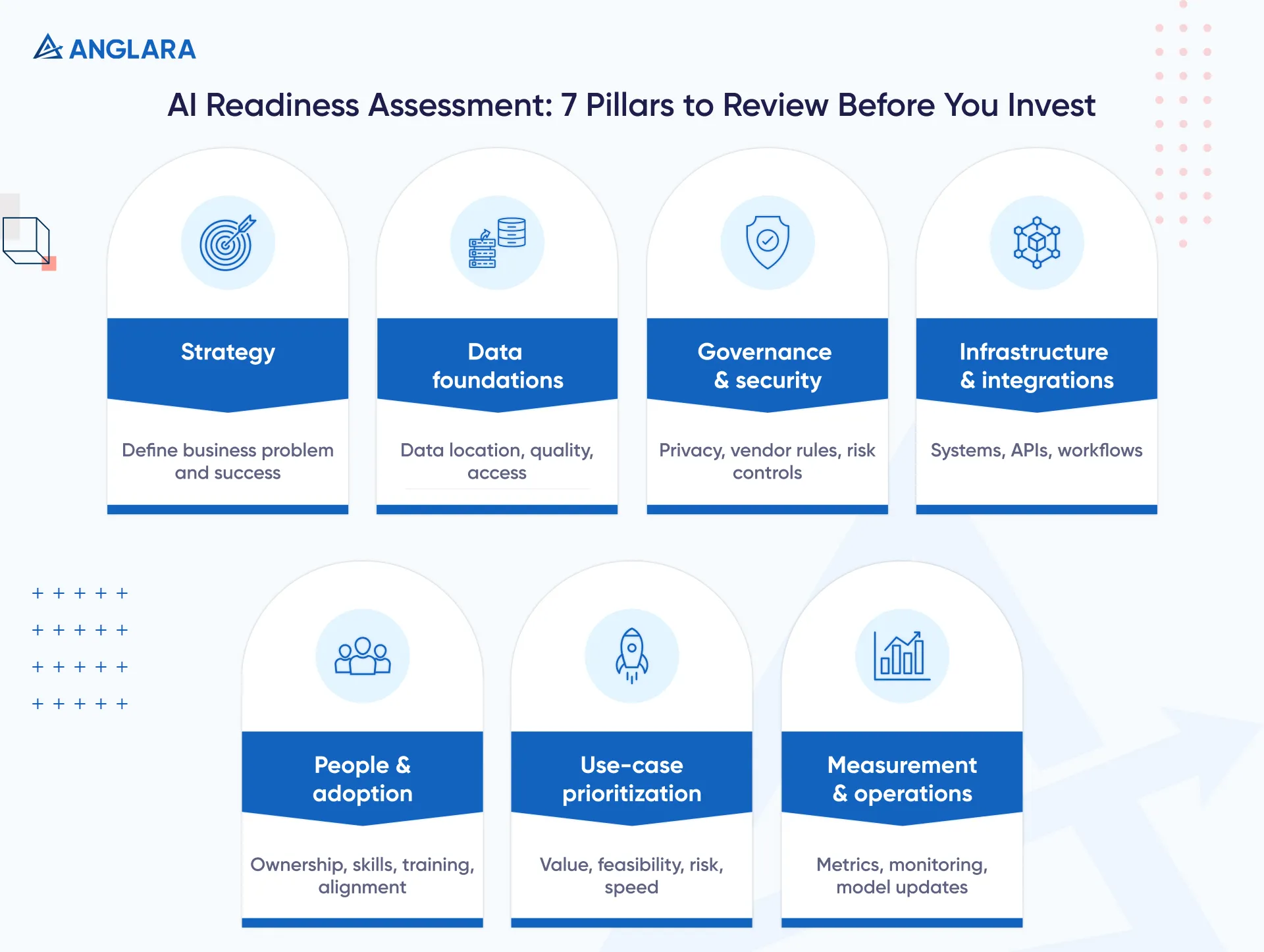

The 7 Areas to Evaluate in an AI Readiness Assessment

Strategy and Business Goals

AI should be tied to a business outcome, not curiosity.

Start with questions like:

- What business problem are we trying to solve?

- What would success look like in 90 days?

- Are we trying to reduce manual effort, improve response speed, increase conversion, or improve decision quality?

- Is this a real priority, or just an executive interest area?

Google Cloud recommends evaluating AI use cases through business value, feasibility, usability, and adoption. That is a strong approach because it keeps the discussion grounded in results, not novelty. (Google Cloud)

If your team cannot clearly define the business outcome, you are not ready to implement. You are still in exploration mode.

Data Foundations

If your data is scattered, inconsistent, or difficult to access, your AI project will struggle.

Google Cloud explicitly says that if your data is not ready for AI, your business is not ready for AI. Microsoft also includes data foundations as one of the core readiness pillars. (Google Cloud)

Look at:

- where your data lives

- how clean it is

- who owns it

- whether it is tagged and structured well enough to support the use case

- how often it changes

- whether access permissions are defined

For example, a support AI assistant may need past tickets, help-center content, product documentation, CRM data, and policy content. If those live in five systems with no ownership model, the issue is not the assistant. The issue is readiness.
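One way to make that ownership gap visible is a small inventory check. The sketch below is illustrative only: the `DataSource` fields, the system names, and the owner name are hypothetical, and a real inventory would likely track more attributes (freshness, quality, tagging).

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class DataSource:
    """One data source the use case depends on (fields are assumptions)."""
    name: str
    system: str
    owner: Optional[str]   # named person or team, None if nobody owns it
    access_defined: bool   # True if access permissions are documented


def readiness_gaps(sources: List[DataSource]) -> List[str]:
    """List the ownership and access gaps found across the sources."""
    gaps = []
    for source in sources:
        if not source.owner:
            gaps.append(f"{source.name}: no named owner")
        if not source.access_defined:
            gaps.append(f"{source.name}: access permissions not defined")
    return gaps


# Hypothetical inventory for a support AI assistant.
inventory = [
    DataSource("past tickets", "helpdesk", owner="Support Ops", access_defined=True),
    DataSource("help-center content", "cms", owner=None, access_defined=True),
    DataSource("CRM data", "crm", owner=None, access_defined=False),
]

for gap in readiness_gaps(inventory):
    print(gap)
```

An empty result does not prove the data is clean. It only confirms that someone is accountable for each source and that access rules exist.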

Governance, Privacy, and Security

This is one of the biggest areas teams underestimate.

The NIST AI RMF is built around managing AI risk with governance and accountability in mind. Microsoft includes governance and security as a dedicated readiness pillar. Cisco also treats governance as a core evaluation area. (NIST)

Your assessment should ask:

- What data can and cannot be used with AI tools?

- Do we have approval rules for external vendors?

- Are prompts, outputs, and logs handled securely?

- Who reviews risk for sensitive use cases?

- What privacy, regulatory, or contractual constraints apply?

This is especially important in healthcare, finance, and customer-support environments where internal staff may already be experimenting with public AI tools.

Infrastructure and Integrations

Many AI ideas fail not because the use case is bad, but because connecting it to existing systems turns out to be harder than expected.

Your assessment should review:

- current cloud and app stack

- API availability

- authentication and permissions

- workflow orchestration tools

- logging and monitoring

- system integration effort

Google’s older AI adoption framework includes themes related to data, automation, and infrastructure maturity. Cisco also calls out infrastructure readiness directly. (Google Services)

If the use case depends on multiple disconnected systems, missing APIs, or brittle manual exports, the readiness score should reflect that.

People, Skills, and Adoption

AI does not get adopted just because it works once.

Google highlights organizational learning and internal support as essential to adoption. Microsoft includes organization and culture in its seven pillars. Practitioners also point out that talent and training gaps get ignored until late in the process. (Google Cloud)

Review:

- who owns the initiative

- whether business and technical teams are aligned

- whether staff know what AI can and cannot do

- how training and rollout will happen

- whether there is resistance or fear around adoption

A technically sound AI workflow that people do not trust will not deliver business value.

Use-Case Prioritization

Not every AI idea should become a project.

A good readiness assessment helps you rank opportunities using filters such as:

- expected business value

- data availability

- implementation complexity

- compliance risk

- ease of adoption

- speed to measurable outcome

This is where teams often uncover a useful truth: the best first AI use case is usually not the flashiest one. It is the one with enough data, enough clarity, and enough business support to prove value quickly.
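The filters above can be turned into a rough ranking. The sketch below is a minimal example under stated assumptions: each filter is rated 1 to 5, all filters carry equal weight, and the two "lower is better" filters (complexity and compliance risk) are inverted so that a higher total always means a better first candidate. The filter keys and the two use cases are hypothetical.

```python
from typing import Dict, List, Tuple

# Filters where a higher rating is better.
BENEFIT_FILTERS = ("business_value", "data_availability",
                   "ease_of_adoption", "speed_to_outcome")
# Filters where a higher rating is worse, so they are inverted below.
COST_FILTERS = ("implementation_complexity", "compliance_risk")


def priority_score(ratings: Dict[str, int]) -> int:
    """Equal-weight score from 6 (worst) to 30 (best) for 1-5 ratings."""
    benefit = sum(ratings[name] for name in BENEFIT_FILTERS)
    inverted_cost = sum(6 - ratings[name] for name in COST_FILTERS)
    return benefit + inverted_cost


def rank_use_cases(candidates: Dict[str, Dict[str, int]]) -> List[Tuple[str, int]]:
    """Return (use case, score) pairs, best candidate first."""
    scored = [(name, priority_score(r)) for name, r in candidates.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Hypothetical candidates: a modest workflow and a flashier one.
candidates = {
    "autonomous_sales_agent": {
        "business_value": 5, "data_availability": 2,
        "ease_of_adoption": 2, "speed_to_outcome": 2,
        "implementation_complexity": 5, "compliance_risk": 4,
    },
    "support_ticket_triage": {
        "business_value": 4, "data_availability": 4,
        "ease_of_adoption": 4, "speed_to_outcome": 4,
        "implementation_complexity": 2, "compliance_risk": 2,
    },
}

print(rank_use_cases(candidates))
# [('support_ticket_triage', 24), ('autonomous_sales_agent', 14)]
```

Equal weights are a simplification. A regulated business might weight compliance risk more heavily, which would change the ranking logic but not the overall approach.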

Measurement and Model Operations

Many teams are excited for launch, but unprepared for what happens after launch.

Microsoft includes model management as a readiness pillar. NIST’s framework also encourages ongoing risk management and review rather than one-time approval. (Microsoft Learn)

Your readiness assessment should cover:

- what metrics matter

- how outputs will be reviewed

- what acceptable accuracy looks like

- who monitors drift, failures, or misuse

- how you handle version changes or vendor changes

This matters whether you are building an internal assistant, a customer-facing bot, or an automated workflow with AI in the loop.

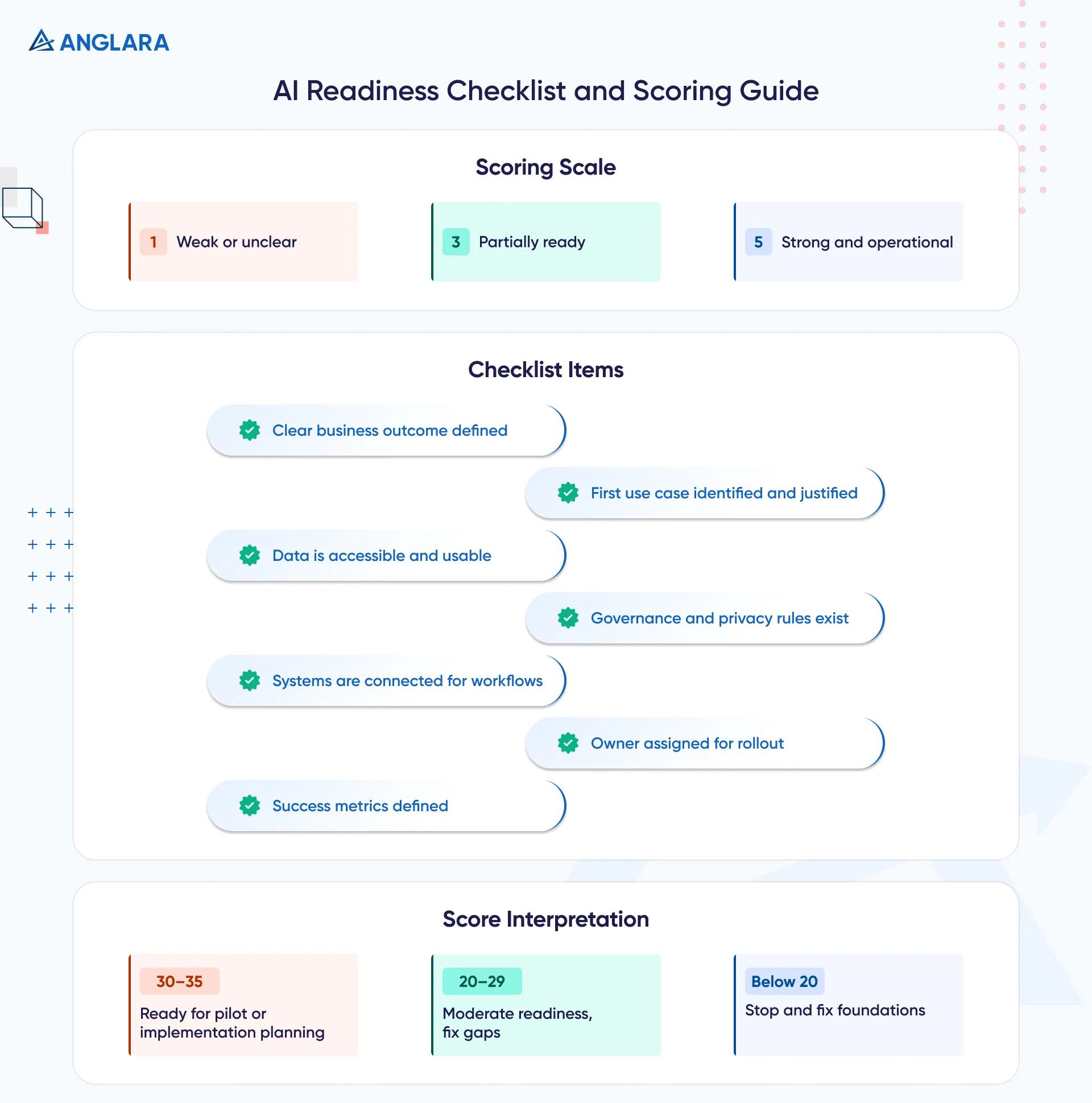

AI Readiness Checklist

Here is a simple scoring approach you can use internally.

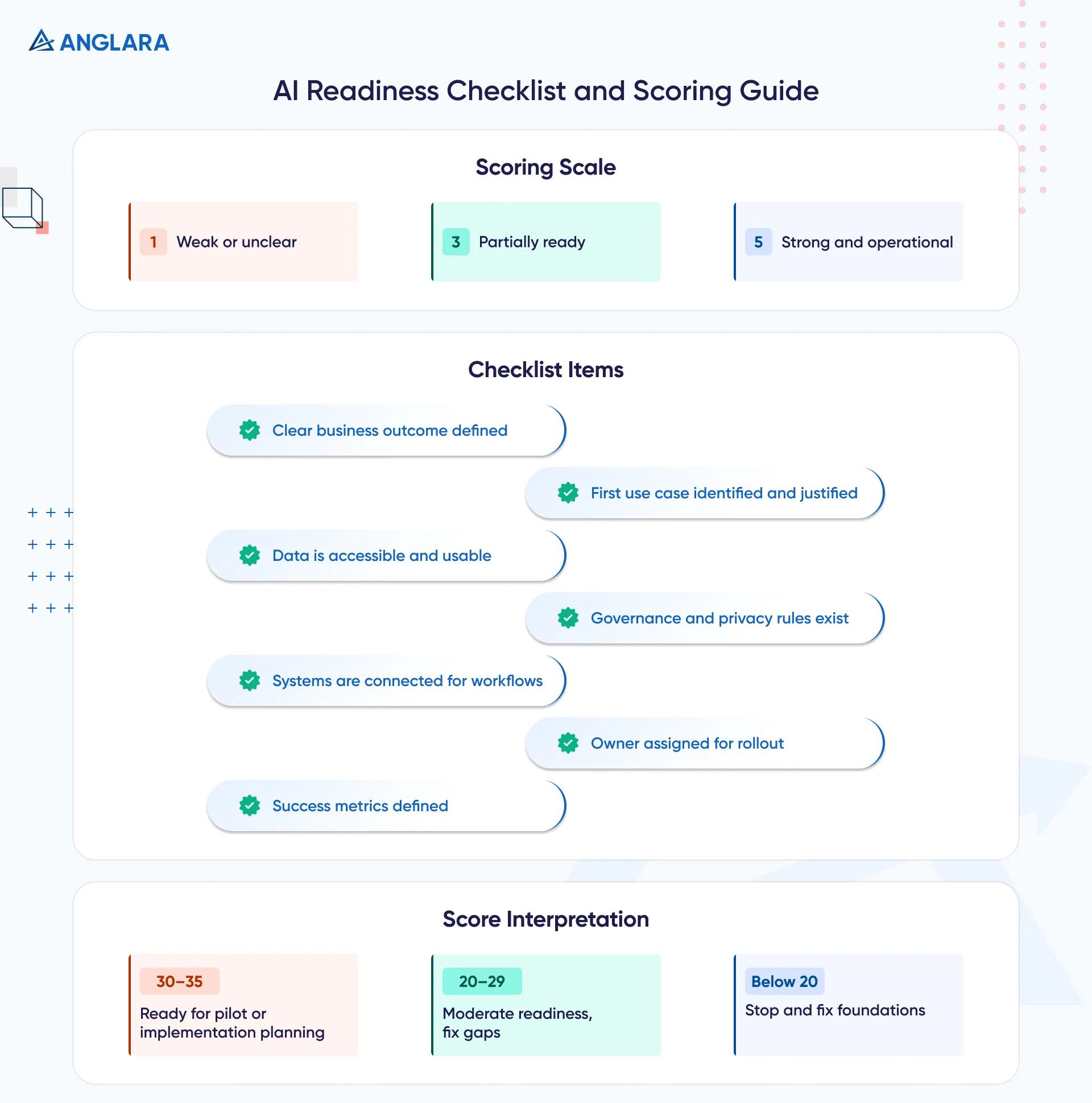

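Score each of the seven checklist questions below from 1 to 5, then add the scores for a total between 7 and 35. Use 2 and 4 for in-between cases. The anchor points are: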

- 1 = weak / unclear

- 3 = partially ready

- 5 = strong / clearly operational

Checklist Questions

- Do we have a clearly defined business outcome for this AI initiative?

- Do we know which use case should come first and why?

- Is the required data accessible, current, and usable?

- Do we have clear rules for data privacy, vendor use, and governance?

- Are the systems we need connected enough to support the workflow?

- Is there a named owner for rollout and adoption?

- Do we know how success will be measured after launch?

Quick Interpretation

- 30–35: strong readiness for pilot or implementation planning

- 20–29: moderate readiness, but with clear gaps to fix first

- Below 20: stop before implementation and resolve the foundations
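The scoring and interpretation steps above can be sketched in a few lines. This is a minimal example, not a formal scoring tool: the area keys are hypothetical names for the seven checklist questions, and flagging any area scored 1 or 2 is an added assumption, included so that a healthy total does not hide a single weak foundation.

```python
from typing import Dict, List, Tuple

# Hypothetical keys, one per checklist question.
AREAS = ("strategy", "use_case", "data", "governance",
         "infrastructure", "ownership", "measurement")


def interpret_readiness(scores: Dict[str, int]) -> Tuple[int, str, List[str]]:
    """Return (total, band, weak_areas) for seven scores of 1 to 5."""
    for area in AREAS:
        if area not in scores:
            raise ValueError(f"missing score for {area!r}")
        if not 1 <= scores[area] <= 5:
            raise ValueError(f"score for {area!r} must be between 1 and 5")

    total = sum(scores[area] for area in AREAS)

    # Bands follow the Quick Interpretation thresholds.
    if total >= 30:
        band = "strong readiness"
    elif total >= 20:
        band = "moderate readiness"
    else:
        band = "resolve foundations first"

    # Assumption: an area scored 1 or 2 is flagged regardless of the total.
    weak_areas = [area for area in AREAS if scores[area] <= 2]
    return total, band, weak_areas


example = {
    "strategy": 4, "use_case": 4, "data": 2, "governance": 3,
    "infrastructure": 3, "ownership": 5, "measurement": 3,
}
print(interpret_readiness(example))
# (24, 'moderate readiness', ['data'])
```

In this example the total lands in the moderate band, and the flagged `data` area points to the gap to fix first.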

When This Is a Good Fit and Not a Good Fit

This Is a Good Fit When:

- leadership wants AI, but needs clarity before funding implementation

- multiple use cases are being discussed and you need prioritization

- teams are worried about security, privacy, or governance

- you want to move from scattered experimentation to a structured roadmap

- you need to identify quick wins without ignoring long-term foundations

This Is Not a Good Fit When:

- the team has already agreed on a validated use case, delivery plan, and rollout owner

- the business only wants a broad AI awareness workshop, not an evaluation

- there is no real sponsor, no timeline, and no intention to act on the findings

An assessment is meant to reduce uncertainty before action. If your business is not yet serious about acting on the output, a shorter strategy session may be enough.

Common Mistakes Teams Make

One common mistake is treating AI readiness as a technology checklist only.

That misses the point.

The strongest sources on this topic consistently show that AI readiness includes strategy, governance, culture, data, and operations, not just tools. (Microsoft Learn)

Other common mistakes include:

- starting with a tool before defining the use case

- assuming existing data is usable without checking quality and access

- ignoring privacy and vendor controls

- underestimating internal adoption and workflow change

- choosing a flashy pilot instead of a measurable one

- failing to define post-launch metrics

This is also why many assessments feel like buzzword exercises to buyers. Some vendor-led assessments are too generic and too sales-led, and that skepticism shows up in practitioner discussions as well. A useful assessment should end with specific actions, not just maturity labels. (Reddit)

What Happens After the Assessment

The assessment is not the end. It should lead to one of three outcomes:

- Proceed now with a prioritized pilot or implementation roadmap

- Fix key blockers first, such as governance, data access, or integration issues

- Pause and narrow scope if the selected use case is not ready yet

For most teams, the practical next step is an implementation roadmap. That roadmap should define:

- priority use case

- business owner

- data and systems involved

- delivery approach

- governance requirements

- success metrics

- timeline for pilot and rollout

FAQs

What is an AI readiness assessment?

An AI readiness assessment evaluates how prepared your business is to adopt AI across strategy, data, governance, infrastructure, people, and operational controls. Its goal is to identify strengths, gaps, and next steps before implementation. (Microsoft Learn)

Why do companies need an AI readiness checklist?

Because most AI blockers are foundational, not model-related. A checklist helps teams spot weak areas early, especially around data, governance, skills, and workflow design. (Google Cloud)

How long does an AI readiness assessment take?

It depends on scope, but many business-level assessments can be completed in a few days to a few weeks. The more systems, teams, and compliance requirements involved, the longer it takes. This estimate is an inference from how major vendors structure their assessments and how many pillars they review. (Google Cloud)

What should an AI readiness framework include?

At minimum: strategy, data foundations, governance and security, infrastructure, people and culture, use-case prioritization, and post-launch measurement. That pattern is consistent across Microsoft, Google Cloud, Cisco, and NIST-aligned guidance. (Microsoft Learn)

Is AI readiness only for enterprises?

No. Smaller businesses can benefit too, especially if they want to avoid buying tools before they know where AI will create value. The scope just becomes lighter and more practical.

What comes after an AI readiness assessment?

Usually an implementation roadmap, pilot definition, or a short list of issues to fix before implementation. In many cases, the assessment is what turns scattered ideas into a realistic delivery sequence.

Key Takeaways

- AI readiness is about more than tools

- The core areas are strategy, data, governance, infrastructure, people, use-case prioritization, and measurement

- The best first AI project is usually the one with clear value and realistic feasibility

- Dirty workflows, weak data access, and missing governance are common blockers

- A good assessment should end with actions, not vague maturity language

- For many teams, the right next step after assessment is a practical implementation roadmap

Next Steps

If your team wants to use AI but needs a structured way to evaluate priorities, risks, and rollout feasibility, start with an AI readiness assessment before moving into implementation.

For businesses that need outside support, Anglara’s AI Business Consulting Services can help evaluate use cases, clarify readiness gaps, and shape a realistic roadmap.