Think AI implementation starts when you pick a tool? Think again.

Most teams do not struggle because AI lacks potential. They struggle because they move from interest to execution without a clear sequence. An AI implementation roadmap helps you connect business goals, data readiness, governance, rollout decisions, and measurement before time and budget start leaking into the wrong pilot.

An AI implementation roadmap is a phased plan that helps a business move from AI interest to measurable execution. It should define the business problem, review readiness, prioritize one strong first use case, shape a realistic pilot, and clarify how rollout, governance, and success measurement will work. The goal is not to “start using AI fast.” The goal is to implement AI in a way that is useful, manageable, and worth scaling.

Who This Is For

This guide is for:

- founders trying to move from AI curiosity to a real first initiative

- CTOs and product owners planning AI-enabled features or internal tools

- CMOs and RevOps leaders looking at AI for workflows, reporting, lead handling, or support

- operations leaders who need a practical implementation path instead of scattered AI ideas

- teams that already see AI potential but do not yet have a clear sequence, owner, or pilot scope

It is especially useful for businesses that have passed the “should we explore AI?” stage and are now asking a harder question: what should happen first, and what should wait?

What Is An AI Implementation Roadmap

An AI implementation roadmap is a structured plan for how your business will adopt AI in stages.

In simple terms, it answers questions like:

- what problem are we solving first?

- what data and systems are involved?

- who owns the initiative?

- what needs to be ready before rollout?

- how will we test value before scaling?

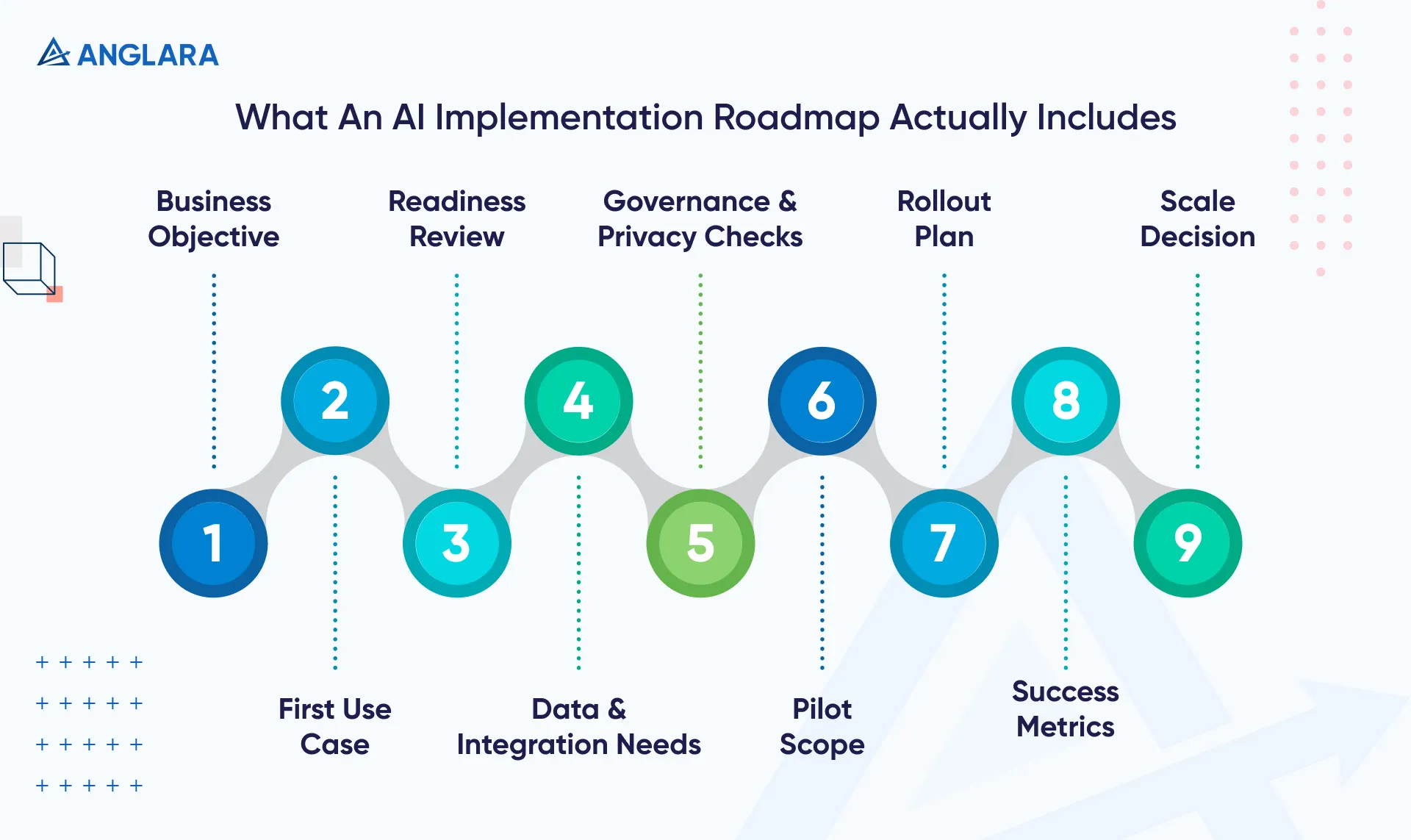

A useful roadmap is not just a timeline. It is a decision framework. It should show:

- the business objective

- the first use case

- readiness and risk review

- required data and integrations

- governance and privacy considerations

- pilot scope

- adoption and training plan

- measurement criteria

- scale decision

If those pieces are missing, what you have is not really a roadmap. It is an intention.

Why Businesses Need A Roadmap Before Implementation

The biggest mistake in AI implementation is rushing from excitement to execution.

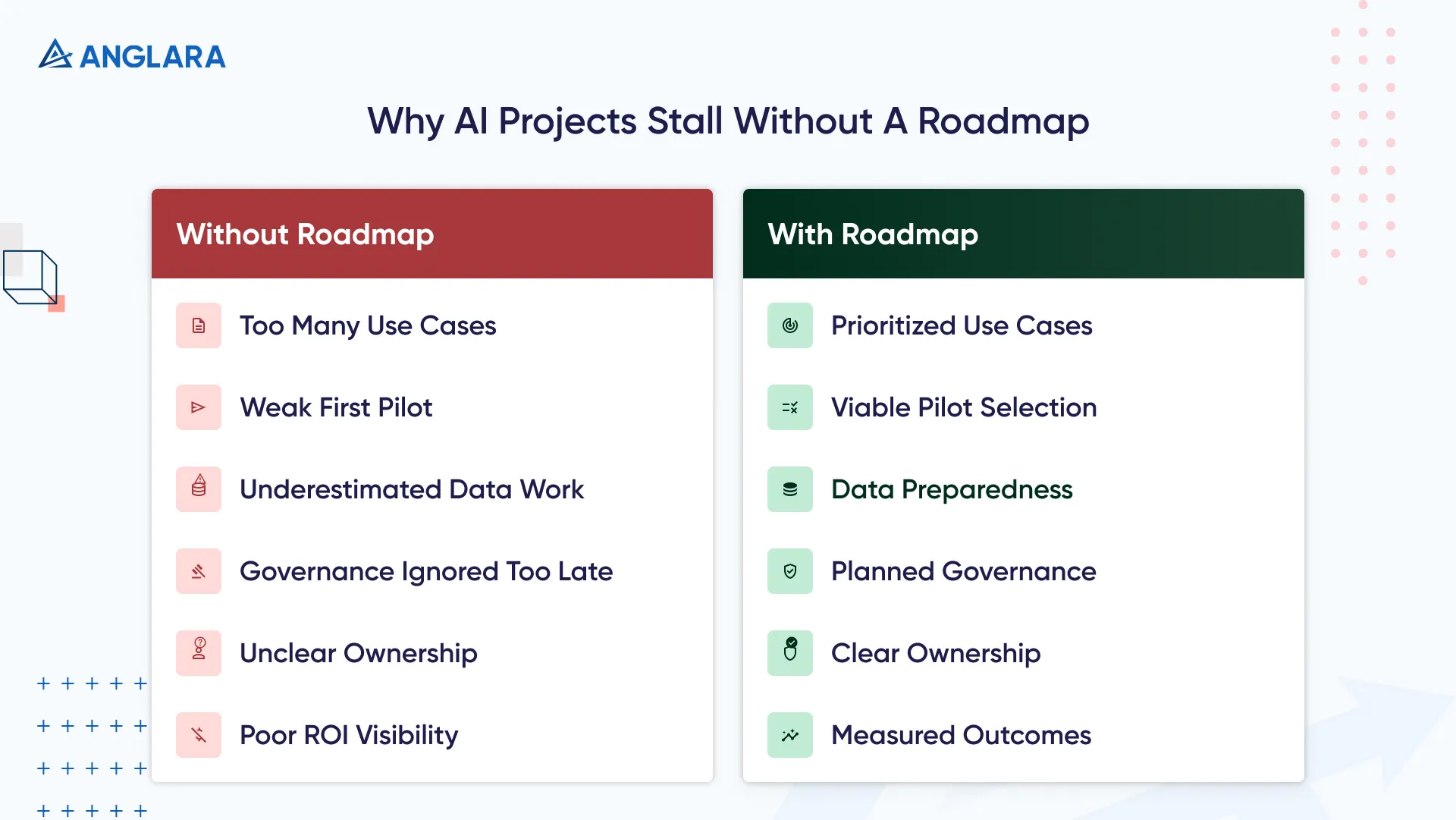

Without a roadmap, teams often:

- pick too many use cases

- choose a flashy but weak first pilot

- underestimate data preparation

- ignore governance until late

- launch something that no team fully owns

- struggle to prove ROI

That is why the roadmap matters. It turns AI from “something we should do” into “something we can execute in phases.”

From a delivery perspective, this is where many teams quietly lose momentum. The issue is usually not that AI is impossible. It is that the first use case was chosen without enough thought about workflows, approvals, or operational ownership. By the time the team realizes that, the pilot already feels slow, expensive, or confusing.

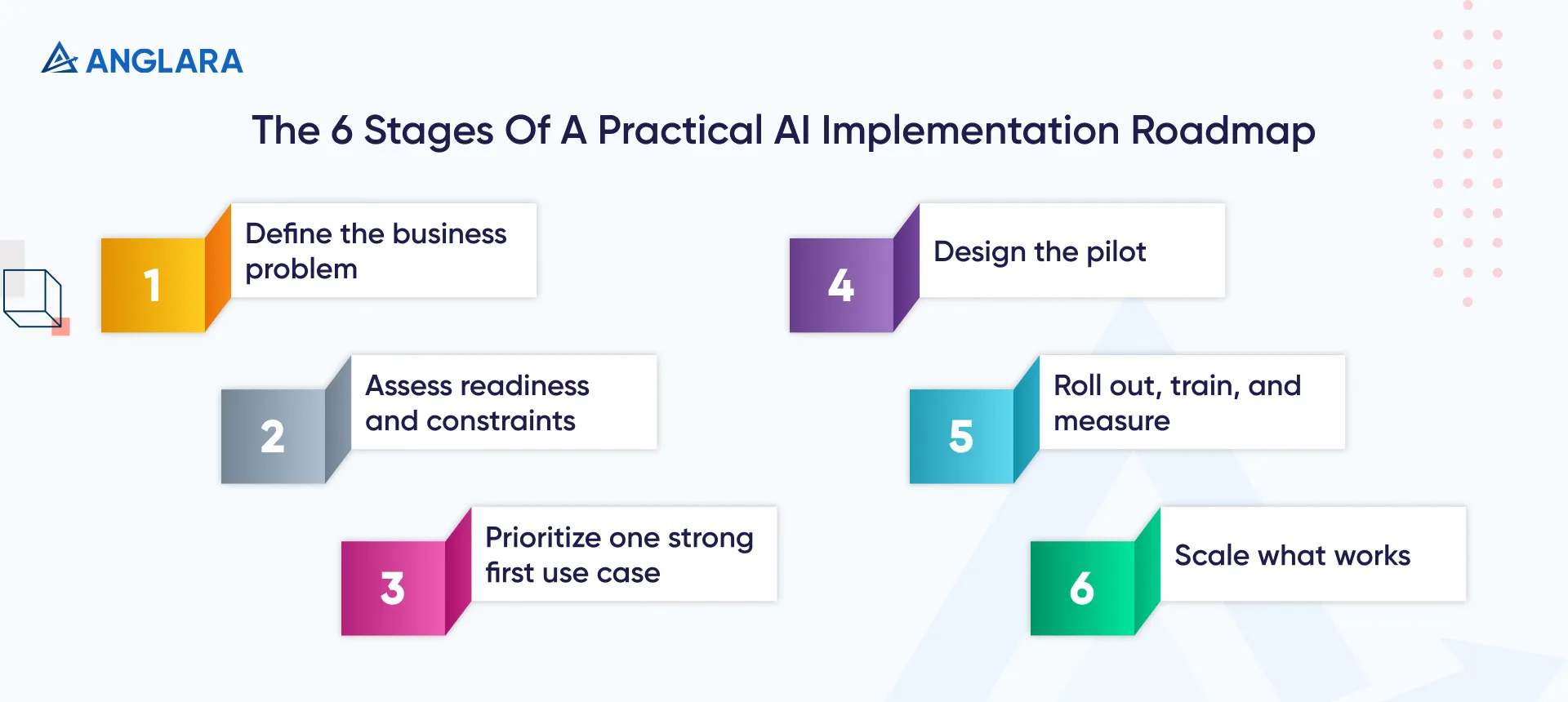

The 6 Stages Of A Practical AI Implementation Roadmap

Stage 1: Define The Business Problem

Start with the business problem, not the model.

Good starting questions include:

- where is time being wasted today?

- where are costs rising?

- which workflow creates delays, errors, or poor customer experience?

- where would better prediction, classification, summarization, or automation make a measurable difference?

Examples:

- customer support teams want to reduce first-response load

- finance teams want faster invoice review or anomaly checks

- marketing teams want AI-assisted reporting or content workflows

- healthcare admin teams want help with documentation or intake routing

If the business problem is vague, the roadmap will become vague too.

At Anglara, this is usually where we would slow the conversation down on purpose. Teams often come in saying they want an AI chatbot, an AI agent, or an automation layer. But that is not the real starting point. The better question is: what friction inside the business is expensive enough, repetitive enough, and clear enough to improve first?

Stage 2: Assess Readiness And Constraints

Before implementation, assess whether the business is actually ready.

Review:

- data availability and quality

- workflow maturity

- system integrations

- privacy and compliance needs

- team ownership

- internal skills

- rollout risks

This stage is where many projects get saved from avoidable failure.

In many implementations, teams underestimate how much of the real work sits around the AI layer rather than inside it. Access to the right system, approval from the right stakeholder, data cleanup, and a simple handoff process often matter more than the choice between two models.

That is also why readiness work should not be treated as a delay. It is often the difference between a pilot that teaches something useful and a pilot that only creates noise.

Stage 3: Prioritize One Strong First Use Case

Do not start with five AI initiatives at once.

A strong first use case usually has:

- a clear pain point

- enough data

- moderate delivery complexity

- low to manageable risk

- measurable success criteria

- visible internal value

That often means your first AI project is not the most ambitious one. It is the most executable one.

Examples of stronger first use cases:

- support ticket triage

- internal knowledge assistant

- invoice data extraction

- lead qualification support

- reporting summarization

Examples of weaker first use cases:

- broad enterprise copilots with unclear ownership

- regulated workflows without governance readiness

- fully autonomous customer decisions too early in the journey

What Anglara would do here

If a team brought us six possible AI ideas at once, we would not score them only by excitement or executive interest. We would score them by four things first:

- business value

- data readiness

- implementation complexity

- ease of adoption

That usually narrows the list quickly. In many cases, the best first use case is the one that improves an existing workflow with some human review still in place. That gives the business faster learning, lower risk, and a cleaner path to scale.
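To make that scoring step concrete, here is a minimal sketch in Python. The four criteria come from the list above. Everything else is an assumption for illustration: the 1 to 5 scale, the weights, the `UseCase` and `priority_score` names, and the sample ratings. Treat it as a starting point for a team discussion, not as a fixed formula.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    name: str
    business_value: int    # 1 (low) to 5 (high)
    data_readiness: int    # 1 (poor) to 5 (ready)
    complexity: int        # 1 (simple) to 5 (very complex)
    ease_of_adoption: int  # 1 (hard) to 5 (easy)


# Hypothetical weights; adjust them to reflect what matters in your business.
WEIGHTS = {
    "business_value": 0.35,
    "data_readiness": 0.25,
    "complexity": 0.20,
    "ease_of_adoption": 0.20,
}


def priority_score(uc: UseCase) -> float:
    """Weighted score on a 1-5 scale; higher means a stronger first use case."""
    # Complexity counts against a use case, so invert it onto the same scale.
    simplicity = 6 - uc.complexity
    total = (
        WEIGHTS["business_value"] * uc.business_value
        + WEIGHTS["data_readiness"] * uc.data_readiness
        + WEIGHTS["complexity"] * simplicity
        + WEIGHTS["ease_of_adoption"] * uc.ease_of_adoption
    )
    return round(total, 2)


def rank(use_cases: list[UseCase]) -> list[UseCase]:
    """Return use cases ordered from strongest to weakest candidate."""
    return sorted(use_cases, key=priority_score, reverse=True)


# Sample ratings are made up for illustration.
candidates = [
    UseCase("support ticket triage", 4, 4, 2, 4),
    UseCase("broad enterprise copilot", 5, 2, 5, 2),
    UseCase("invoice data extraction", 3, 4, 2, 3),
]
ranked = rank(candidates)
for uc in ranked:
    print(f"{uc.name}: {priority_score(uc)}")
```

With these sample ratings, the copilot has the highest business value but still ranks last, because low data readiness and high complexity pull its score down. That is the "most executable, not most ambitious" idea expressed as arithmetic.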

Stage 4: Design The Pilot

Once the use case is chosen, define the pilot tightly.

The pilot should answer:

- what is in scope?

- what is out of scope?

- what data is needed?

- which systems connect to it?

- who reviews outputs?

- what metrics define success?

- how long should the pilot evaluation run?

A good pilot is:

- narrow enough to finish

- real enough to matter

- measurable enough to evaluate

- safe enough to govern

For example, instead of “deploy AI in customer service,” the pilot could be:

- summarize incoming support tickets

- suggest reply drafts for tier-1 inquiries

- route tickets by intent and urgency

- keep human review in the loop

That is much easier to validate.
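One way to keep a pilot definition honest is to write it down as a small structured record and check which questions are still unanswered. The sketch below is one possible shape, assuming Python. The `PilotSpec` fields mirror the pilot questions above, while the field names, the `unanswered` helper, and the sample values (such as the "helpdesk" system and the four-week evaluation window) are hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass
class PilotSpec:
    in_scope: list[str]
    out_of_scope: list[str]
    data_needed: list[str]
    connected_systems: list[str]
    output_reviewers: list[str]
    success_metrics: list[str]
    evaluation_weeks: int


def unanswered(spec: PilotSpec) -> list[str]:
    """Return the names of fields that are still empty (empty list or zero)."""
    return [f.name for f in fields(spec) if not getattr(spec, f.name)]


# Sample values are illustrative, based on the customer service example above.
spec = PilotSpec(
    in_scope=[
        "summarize incoming support tickets",
        "suggest reply drafts for tier-1 inquiries",
        "route tickets by intent and urgency",
    ],
    out_of_scope=["sending replies without human review"],
    data_needed=[],  # not decided yet, so the check below should flag it
    connected_systems=["helpdesk"],
    output_reviewers=["tier-1 support lead"],
    success_metrics=["first-response time compared to baseline"],
    evaluation_weeks=4,
)

gaps = unanswered(spec)
print(gaps)
```

If `gaps` is not empty, the pilot is not fully defined yet, and that is worth resolving before any build work starts.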

One thing teams often miss here is that pilot scope should be intentionally smaller than stakeholder enthusiasm. If the room is excited, the instinct is to pack in more features. In practice, that usually hurts learning. A pilot should prove one meaningful thing well, not try to prove everything at once.

Stage 5: Roll Out, Train, And Measure

Implementation is not finished when the pilot works.

This stage should include:

- user onboarding

- change communication

- clear ownership

- QA review process

- monitoring and incident handling

- KPI tracking

Examples of useful KPIs:

- time saved per task

- response speed improvement

- error reduction

- throughput increase

- deflection rate

- adoption by target users

- downstream revenue or cost effect
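Most of these KPIs only mean something relative to a baseline captured before the pilot. As a rough sketch, assuming Python, the helpers below compute a relative change and an adoption rate. The function names and all of the numbers are illustrative assumptions, not results from a real pilot.

```python
def percent_change(baseline: float, current: float) -> float:
    """Relative change from baseline, in percent (negative means a decrease)."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero to compute a relative change")
    return round((current - baseline) / baseline * 100, 1)


def adoption_rate(active_users: int, target_users: int) -> float:
    """Share of target users who actually used the workflow, in percent."""
    if target_users <= 0:
        raise ValueError("target_users must be positive")
    return round(active_users / target_users * 100, 1)


# Illustrative numbers only.
handling_time_change = percent_change(baseline=12.0, current=9.0)  # minutes per ticket
error_change = percent_change(baseline=40, current=30)             # errors per 500 tickets
adoption = adoption_rate(active_users=18, target_users=24)

print(handling_time_change, error_change, adoption)
```

In this made-up example, handling time and errors both drop by 25 percent while adoption sits at 75 percent of target users. Reading the three together matters: strong efficiency numbers with weak adoption would point to a rollout problem rather than a model problem.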

If users do not trust the workflow or do not know when to use it, the AI may work technically but still fail operationally.

From a delivery-side view, this is where AI projects stop being “technology work” and become “adoption work.” What often breaks during implementation is not the first demo. It is the handoff into real usage. If training is weak, review rules are unclear, or the team feels the system is unpredictable, adoption stalls even when the pilot looked promising.

Stage 6: Scale What Works

Once the first use case proves value, scale carefully.

Scaling does not just mean adding more users. It may mean:

- increasing volume

- expanding use cases

- integrating additional systems

- formalizing governance

- improving reporting and model operations

- standardizing prompts, review flows, or training data processes

This is where a roadmap becomes a portfolio, not just a single project plan.

A practical lesson here is that scale should be earned, not assumed. If a pilot delivered some value but still creates manual review bottlenecks or governance questions, that does not mean “scale now.” It usually means “stabilize first, then scale.”

A Simple Sample Roadmap For Business Teams

Here is a practical example of what a first implementation roadmap can look like.

Weeks 1–2: Clarify Business Goal And Shortlist Use Cases

- define business outcome

- review workflows

- identify one strong first use case

- confirm executive sponsor and operational owner

Weeks 3–4: Readiness And Pilot Design

- validate data access

- identify integration needs

- define scope and guardrails

- document risks and success metrics

Weeks 5–8: Pilot Build And Controlled Rollout

- configure the workflow

- test with limited users or limited volume

- monitor quality and operational fit

- collect user feedback

Weeks 9–12: Measure And Decide

- compare metrics to baseline

- review governance and adoption gaps

- refine the workflow

- decide whether to scale, revise, or pause

This is a sample planning format, not a fixed universal timeline. Some teams move faster. Others need more time because of system complexity, compliance requirements, or stakeholder count.
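The "scale, revise, or pause" decision in weeks 9–12 is easier to defend when the rule is agreed before the pilot starts. Here is a minimal sketch of such a rule, assuming Python. The `decide` function, its inputs, and the 50 percent threshold are hypothetical; the only idea taken from this guide is that open bottlenecks or governance questions mean "stabilize first, then scale."

```python
from enum import Enum


class Decision(Enum):
    SCALE = "scale"
    REVISE = "revise"
    PAUSE = "pause"


def decide(targets_met: int, targets_total: int, open_blockers: int) -> Decision:
    """Suggest a next step from pilot results.

    targets_met / targets_total: how many success metrics hit their target.
    open_blockers: unresolved governance, review, or adoption issues.
    """
    if targets_total <= 0:
        raise ValueError("targets_total must be positive")
    if not 0 <= targets_met <= targets_total:
        raise ValueError("targets_met must be between 0 and targets_total")

    hit_ratio = targets_met / targets_total
    # Hypothetical threshold: fewer than half the targets met suggests pausing.
    if hit_ratio < 0.5:
        return Decision.PAUSE
    # Some value shown, but targets missed or issues still open: stabilize first.
    if hit_ratio < 1.0 or open_blockers > 0:
        return Decision.REVISE
    return Decision.SCALE


print(decide(targets_met=4, targets_total=4, open_blockers=2).value)
```

A rule like this will not replace judgment, but it forces the team to say in advance what "proven value" means, which makes the final review less about opinion.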

When This Is A Good Fit And Not A Good Fit

This Is A Good Fit When:

- your team already sees AI potential but needs a practical implementation path

- you have more than one idea and need prioritization

- you want to reduce risk before scaling

- you need clarity on ownership, pilot scope, and measurement

- you want a business-first plan, not just tool recommendations

This Is Not A Good Fit When:

- the team is still at a very early stage of AI awareness

- no sponsor exists internally

- there is no real use case under discussion yet

- leadership wants results but will not support workflow change, data access, or user adoption work

In those cases, an AI readiness assessment usually comes first.

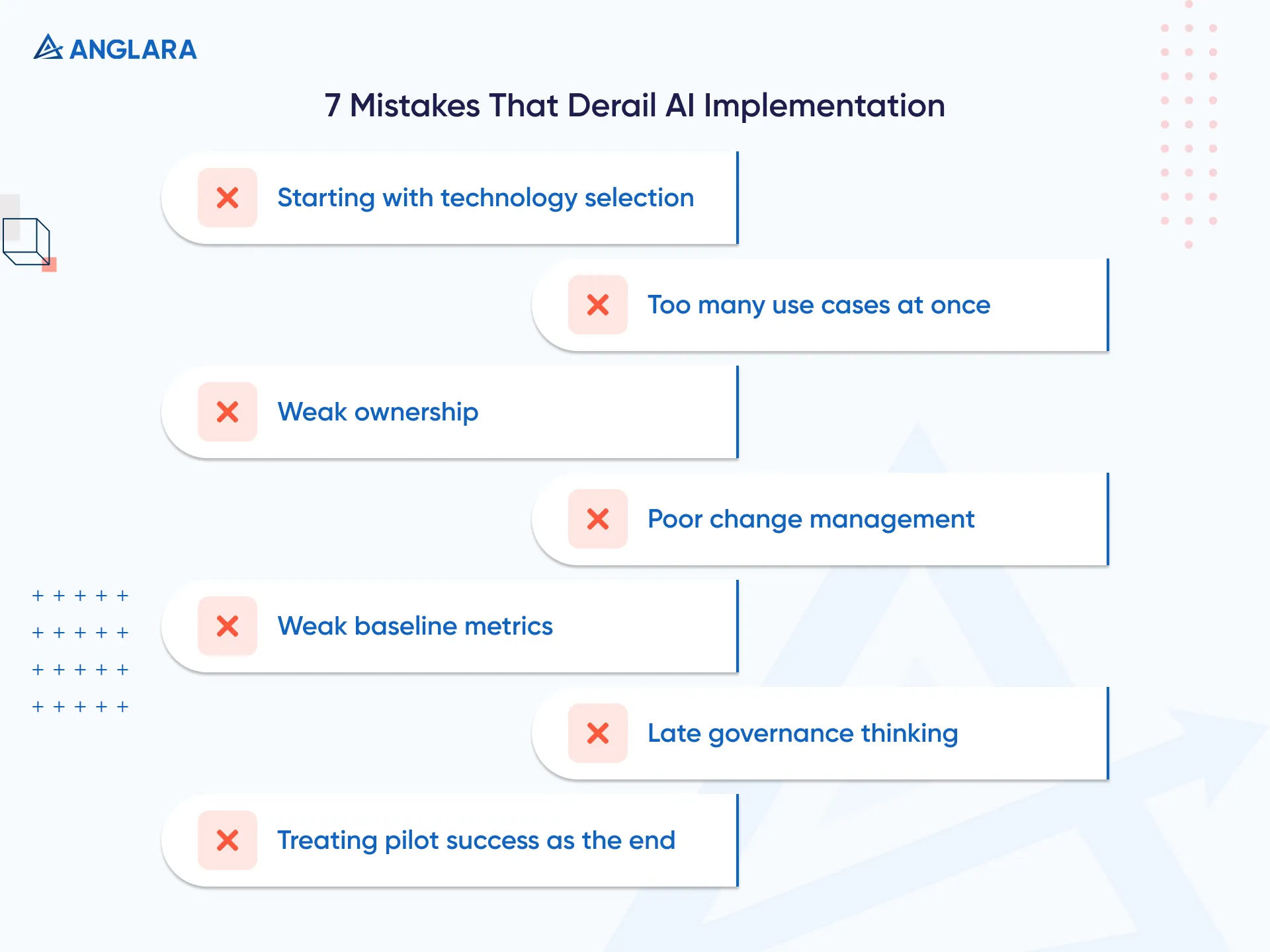

Common Mistakes That Derail AI Implementation

One of the biggest mistakes is assuming the roadmap starts with technology selection.

It usually does not.

Other common mistakes include:

- trying to launch too many use cases at once

- skipping internal ownership

- underestimating change management

- using poor baseline metrics

- ignoring security and privacy until late

- choosing a pilot that is interesting but hard to operationalize

- treating pilot success as the end instead of the beginning

At Anglara, we would be especially careful about one pattern: businesses choosing a first use case because it looks impressive in a demo rather than because it fits the current operating reality. That gap between demo appeal and workflow fit is where many early AI initiatives quietly lose trust.

What Comes After The Roadmap

Once the roadmap is complete, your next step should be obvious.

Usually that means one of three paths:

- proceed with a tightly scoped pilot

- fix blockers before pilot launch

- narrow the use case and re-plan

For many teams, this is also the point where outside consulting becomes useful. Not because the business cannot understand AI, but because implementation usually requires cross-functional sequencing across strategy, systems, data, governance, and adoption.

If your team is already convinced AI can help, the roadmap is what turns that belief into an executable sequence.

FAQ

What is an AI implementation roadmap?

An AI implementation roadmap is a phased plan for adopting AI in a business, from readiness and use-case selection through pilot, rollout, and scale.

Why is an AI roadmap important?

It reduces wasted effort by aligning AI initiatives with business goals, data readiness, governance, ownership, and measurement before rollout begins.

What should an AI implementation roadmap include?

A strong roadmap should include business goals, use-case prioritization, readiness review, data needs, governance, pilot scope, KPIs, ownership, and scale decisions.

What comes first: AI readiness assessment or AI implementation roadmap?

Usually readiness comes first. The roadmap becomes much stronger when data, governance, infrastructure, and use-case fit have already been reviewed.

How many AI use cases should a team start with?

Usually one strong first use case is better than launching several at once.

What is the first step in AI implementation?

Define the business problem and desired outcome before discussing tools or models.

How do you measure success in AI implementation?

Use metrics tied to the workflow, such as time saved, speed, quality, cost reduction, throughput, adoption, or revenue impact.

Key Takeaways

- an AI implementation roadmap should start with business problems, not tool selection

- strong roadmaps connect use-case choice, readiness, pilot design, governance, and measurement

- the best first use case is usually the most executable one, not the flashiest one

- rollout success depends on adoption, training, and KPI tracking, not just build quality

- readiness assessment and roadmap planning work best together

- scaling should happen only after pilot value is proven

Next Steps

If your team already believes AI could help but needs a practical plan, the next move is not random experimentation. It is roadmap planning.

Start with an AI readiness assessment if your foundations still need review, then move into implementation planning with a clearer first use case, tighter pilot scope, and better delivery confidence.

If you want outside support shaping that path, Anglara’s AI consulting work can help define priorities, roadmap phases, and practical rollout direction before implementation effort expands.